I. Manual Airbrushing

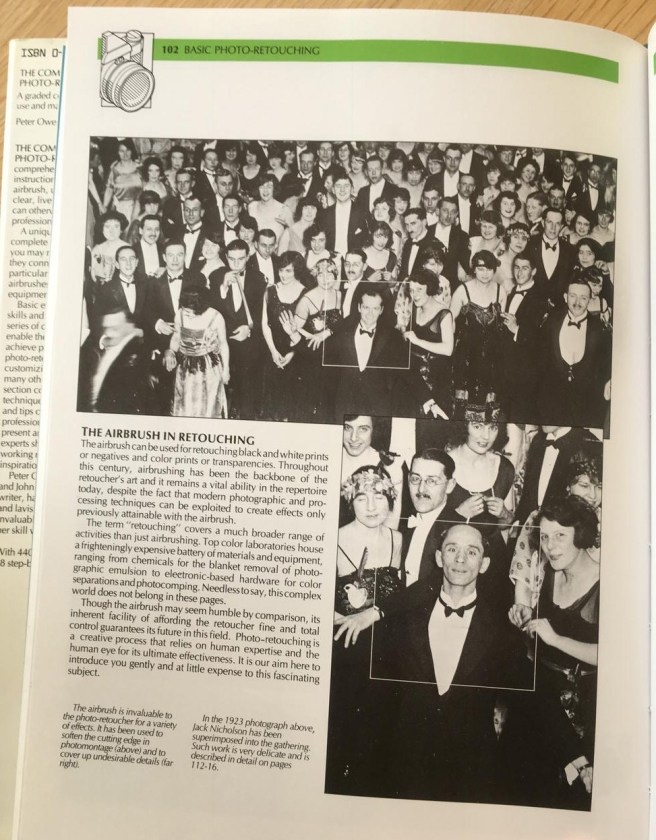

At the end of the 1980 Stanley Kubrick film The Shining (sorry! spoilers!), a photograph is revealed to show Jack Nicholson’s character, Jack Torrance, at the centre of attention at a 1921 party, which, Kubrick later said, suggests Torrance is a reincarnation of an earlier hotel caretaker. The photograph was not simply a staged shot of the extras who appear in the film; instead, it was an adapted version of:

a photograph taken in 1921 which we found in a picture library. I originally planned to use extras, but it proved impossible to make them look as good as the people in the photograph. So I very carefully photographed Jack, matching the angle and the lighting of the 1921 photograph, and shooting him from different distances too, so that his face would be larger and smaller on the negative. This allowed the choice of an image size which when enlarged would match the grain structure in the original photograph. The photograph of Jack’s face was then airbrushed in to the main photograph, and I think the result looked perfect. Every face around Jack is an archetype of the period. (Kubrick interviewed by Michel Ciment between 1975 and 1987, transcribed here).

Details of this process are provided in the 1985 “Complete Airbrushing and Photo-Retouching Manual”, which I recently purchased for a penny, there not being much demand for airbrushing these days.

Photographs have never been neutral. How they are taken, framed, chosen, discarded and processed informs, and literally colours, our view of history, and the medium has always been tweaked and retouched to show a different sort of reality: one that we require, or that others think we may prefer. In the case of The Shining, the manual retouching of a historic photograph provides a twist, an uncanny ambiguity, to the whole movie. But since their invention, photographs have routinely been improved, manipulated, and adjusted through a variety of processes to enhance their appearance or change their content. As I said in “Digital Images for the Information Professional” back in 2008:

The defacing or erasing of historical personages, documents, artefacts, and architecture is well attested: if you control the image, you control the ideology, and the information passed on to the viewer… photographic images are very easy to manipulate, raising issues of trust, verification, and ethics when using them for proof, research, or evidence of any kind.

As well as the manual manipulation and retouching of photographs simply to make people look better, which became common in the late Victorian era and found its heyday in making Hollywood starlets picture-perfect, these techniques were put to more chilling purposes in the USSR in the 1930s, where

The physical eradication of Stalin’s political opponents at the hands of the secret police was swiftly followed by the obliteration from all forms of pictorial existence. Photographs for publication were retouched and restructured with airbrush and scalpel to make once famous personalities vanish… So much falsification took place… that it is possible to tell the story of the Soviet era through retouched photographs… Faking photographs was probably considered one of the more enjoyable tasks of the art department of publishing houses during those times. It was certainly much subtler than the “slash-and-burn” approach of the censors. For example, with a sharp scalpel, an incision could be made along the leading edge of the image of the person or object adjacent to the one who had to be removed. With the help of some glue, the first could simply be stuck down on top of the second. Likewise, two or more photographs could be cannibalized into one using the same method. Alternatively an airbrush (an ink-jet gun powered by a cylinder of compressed air) could be used to spray clouds of ink or paint onto the unfortunate victim in the picture. The hazy edges achieved by the spray made the elimination of the subject less noticeable than crude knife-work… Skillful photographic retouching for reproduction depended, like any craft before the advent of computer technology, on the skill of the person carrying out the task and the time she or he had to complete it. (David King, 1997, The Commissar Vanishes, Henry Holt and Company, New York, pages 9-13).

Airbrushing reigned, for good or ill, in photographic manipulation for nearly 100 years. As our 1985 manual explains:

The airbrush has been in existence since 1893. During that time it has repeatedly been denounced as a novelty, phase, or fad. It is an inarguable truth that today more airbrushes are being sold than ever before, and that owners of airbrushes are producing work in an ever-increasing number of different styles. The artists themselves are guaranteeing a tremendous future for the tool, by a natural evolution of images that defy categorization… the outlook has never been more healthy. (Owen and Sutcliffe (1985) The Complete Airbrushing and Photo-Retouching Manual, North Light Books, p. 130).

An artistic manual process that required skill and training: could computers ever compare?

Few commercial activities have escaped the scare-mongering that has accompanied the rise to prominence of the computer: that, sooner or later, the computer will take over from human ability. Airbrushing is no exception. This notion can be instantly dispelled by the fact that, despite the extraordinary advances in computer technology, no electronic process has yet been developed to fulfill satisfactorily the function of human creativity. Nor is any such development on the horizon. (ibid).

Our manual was published in 1985 and was so popular that a second edition was printed in 1988. In September of that year, Adobe Systems Incorporated acquired the distribution rights to a little piece of software called Photoshop, which was released commercially in 1990. Although dedicated high-end computer systems for photo retouching had existed before this point, Photoshop (and other graphic design programs) democratised and expanded the use of digital retouching methods. A Kickstarter-funded film to be released later this year, Graphic Means, will trace this change from manual to computational methods within the design sector: we now live in a world where manual cutting, splicing, and airbrushing seem like distant history.

II. Photoshop and filters

Fast forward twenty-five years. And so everything is now digital, right? Everyone has access to digital photo retouching tools, and even “machine learning” photo-changing apps! Digital photographic retouching is now all-pervasive, both within the advertising industry (which often gets it wrong) and among individuals, who can use a range of apps to correct, adjust, and improve selfies for sharing on social media. Can’t do it yourself? The skill set is now so common that you can have someone on Fiverr retouch your photographs for minimal cost (and some people even make social commentary artwork out of it). The days of manual tweaking of photographs are over! Except. The tools currently available for photographic adjustment still require skill and expertise to use. The range of filters and tools is dazzling, but they still need a human operator to do the retouching, drive the machine, and make bespoke, one-off adjustments (such as would be required in a digital retouching of our Shining pic). Even the fancy filters du jour that are sold as machine learning, such as Prisma, are very blunt tools, and require some level of selection, input, operation, and request from an app user. The filters may be more and more advanced, but they a) have limited, fixed variables, b) still require a level of human intervention, and c) only tweak the appearance, not the semantic content, of the photograph. Zomg! I’ve been Prisma-ed! Machine learning, dontchaknow!

So much, so fun. But exchanging (rather than just filtering) someone’s face in a historic photograph, à la The Shining, still requires someone to sit down and work on making the photographic content look realistic, even though the tools have changed from manual to digital. Surely this will always be the case, right? Despite the extraordinary advances in computer technology, no electronic process has yet been developed to fulfill satisfactorily the function of human creativity. Nor is any such development on the horizon. I seem to have heard that somewhere before…

III. Enter the Robots

Earlier this year, I had the good fortune to attend a symposium at the Royal Society’s country estate on the topic of Imaging in Graphics, Vision and Beyond. The aim of the symposium was to bring together researchers in disciplines spanning computer graphics, computer vision, cultural heritage, remote sensing and bio-photonics to discuss interdisciplinary approaches and scope out new research areas. I was there with UCL’s Tim Weyrich, given our work on the Great Parchment Book. It was a great two days, and not just for the academic craic (my room was THE OLD LIBRARY! it was glorious).

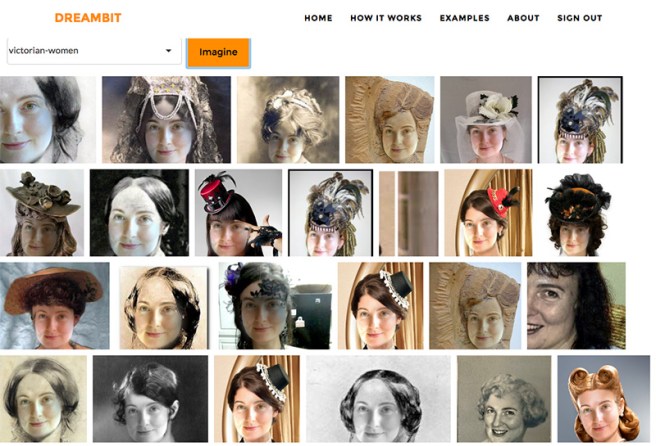

The paper that made me sit up most and go… here come the awesome robots… was from Dr Ira Kemelmacher-Shlizerman, Assistant Professor of Computer Science and Engineering, who co-leads the UW Graphics and Imaging Laboratory at the University of Washington. Ira demonstrated a personalised image search engine designed to show you different potential views of people. Give it a picture of a face and a text query, and it returns photographs with the submitted person automatically embedded in them. Let me give you an example (Ira has given me permission to share these). First she takes the input picture:

The search term used is “1930s”, and bingo: Ira as film star, seamlessly integrated automatically into the historical photographic record.

The new system, called Dreambit, analyses the input photo, searches for a subset of photographs available online that match it for shape, pose, and expression, and then synthesises the results automatically, building on the team’s previous work on facial processing and three-dimensional reconstruction for modelling people from massive, unconstrained photo collections. You can keep your Prisma: here is machine learning at its cutting-edge best. More details about how the system works are available in the recent SIGGRAPH 2016 paper where it was launched (hefty 45MB download), and you can sign up here for free beta access for when Dreambit is launched, hopefully later in the year.

The potential market applications for this are huge (it has been described as a system for trying out different hairstyles, but one can also imagine using it to create bespoke gifts, especially greetings cards: who needs a generic sepia historical humour card when you can slot a pic of you and a loved one into the picture, for larks?). But what interests me is what this means for institutions and collections creating digitised historical photographic archives, and where, conceptually, this is taking us in understanding how historic photographs can be used, reused, and re-appropriated in the digital realm. You will no longer have to go to a picture library and manually tweak, burn, and dodge a physical print of a photograph to include it in a film: we’ll soon have computer systems that can do that seamlessly for us.

IV. We Need to Talk About What This Means for Digitisation of the Photographic Record

I’m not sure I’ve really conceptualised what this means for historic photographic archives in the online era yet. There are clearly copyright and licensing issues at play, which are always a concern in the library and archive community, but beyond that: what does this mean for those in the sector? We’ve barely got our heads around how historic photographs lose their metadata, or any sense of accreditation or even factual accuracy, when they go off into the internet wilds on their own, or how historical photograph content can be monetised in ways institutions never envisaged, never mind what happens when the content starts getting tweaked and rewritten, automatically, swiftly, robotically, changing its very content as well as its context. Are we ready for the robots entering the digitisation landscape? What fun can we have with this, and what worries does it bring? (I can imagine various public engagement apps, where Dreambit is applied to particular photographic collections: is this best done with an institution’s permission, or will it happen anyway in the internet wilds if collections don’t play along?) There are also ethical issues at play in the reuse and appropriation of historical and cultural content: what can we do to educate both other researchers and the general public about the ramifications of these technologies, as applied to the historical photographic record?

We’ve come a long way from the physical photographic processes needed to put someone else into the picture. Now we need to think about how we can use this emergent technology to work alongside our digitised content, and so retain some kind of control over institutional digital collections. I’ll be really interested in what discussions this provokes, and in what the worries and benefits of the technology are seen to be. It would be wise to start thinking about how we can use collections in this content-changing world, rather than building false barriers to access that we may not be able to maintain.

I find Dreambit’s potential amazing. I’ve asked Ira if she could put my picture into the one used at the end of the Shining. I’m sure it will now only take the click of a button.

Update: 22nd August 2016: Ira put me in the picture…

One thought on “From airbrush to filters to AI…The Robots Enter the Photographic Archive”